As science journalists who take our job seriously, we’ve learned a few rules by heart: never present a correlation as causation, always check whether a sample is representative, and never rely on a single study. As the saying goes: one swallow doesn’t make a summer.

These are all good starting points. But they are far from making us unimpeachable in our reporting.

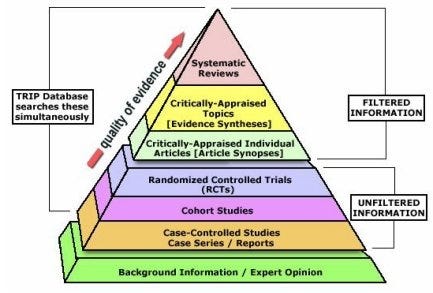

Because of the third principle, we tend to rely on review studies, more specifically on systematic reviews and meta-analyses. Simply put, a systematic review is a review of the scientific literature on a particular research question, carried out systematically to reduce bias. Some reviewers include only randomized clinical trials; others include other study designs as well. A meta-analysis is a statistical method for combining the results of several studies into a single pooled result and conclusion. It is often the final piece of a systematic review.
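To make "combining the results of several studies" a little more concrete, here is a minimal sketch of one common pooling approach, a fixed-effect inverse-variance meta-analysis. It assumes each study reports an effect estimate and its standard error. The function name `fixed_effect_meta` and the numbers in the example are hypothetical, and many real meta-analyses use a random-effects model instead.

```python
import math


def fixed_effect_meta(effects, std_errors):
    """Pool study effect estimates with inverse-variance weights (fixed-effect model).

    Hypothetical helper for illustration: each study contributes an effect
    estimate and its standard error.
    """
    if not effects or len(effects) != len(std_errors):
        raise ValueError("need one standard error per effect estimate")
    # Each study is weighted by 1 / variance, so more precise studies count more.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    # 1.96 gives an approximate 95% confidence interval under a normal approximation.
    ci = (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)
    return pooled, pooled_se, ci


if __name__ == "__main__":
    # Three made-up studies: effect estimates and their standard errors.
    pooled, se, (low, high) = fixed_effect_meta([0.30, 0.10, 0.20], [0.10, 0.20, 0.10])
    print(f"pooled effect {pooled:.3f} (SE {se:.3f}), 95% CI {low:.3f} to {high:.3f}")
```

With these made-up numbers the pooled effect is about 0.233, with a 95% confidence interval of roughly 0.103 to 0.364. The second study, with twice the standard error, gets only a quarter of the weight of the other two. Choices like this weighting scheme and which studies get included shape the "single result" a meta-analysis reports.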

But there is something strange going on here. While we at least try to scrutinize the methods and limitations of individual studies, we rarely do the same with systematic reviews and meta-analyses. Because they are regarded as the gold standard of empirical science, sitting at the top of the ‘pyramid of evidence’, we take their results and conclusions for granted and treat them as objective debate-enders.

Read the full article: What science reporters should know about meta-analyses before covering them

Appreciate this article and my work? You can now donate and keep me busy.

If you like this article and want to show it with a small (or big) donation, that’s possible. This way, you help independent (science) journalism survive and flourish.